What was behind Friday’s National Grid outage? Network theory, not conspiracy

National Grid is getting a kicking in the aftermath of last Friday’s electricity blackout.

Potential explanations swirl around both social and mainstream media. The system cannot cope with too much wind-generated electricity. The Russians hacked into the computers.

A puzzling aspect is that the initial shock to the National Grid was a very small one. The gas-fired station at Little Barford in Bedfordshire went down. Within minutes, a massive power outage had taken place.

Rebecca Long-Bailey, Labour’s shadow energy secretary, has homed in on this. The fact that a small outage had such huge consequences is, to her, clear evidence of under-investment, and makes the case for public ownership. But scientific advances over the past 20 years provide a quite different perspective.

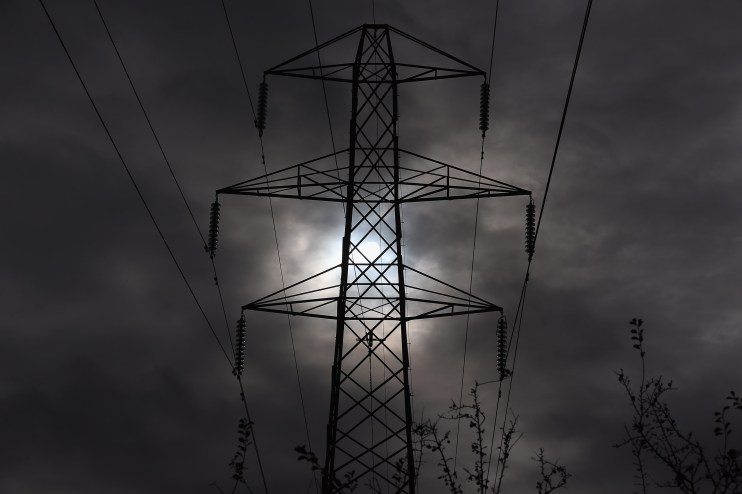

The National Grid is, by definition, a network. Power stations receive supplies from various sources, and then the energy is transmitted from them to businesses and households via power lines.

A key discovery in the maths of how things spread across networks is that in any networked system, any shock, no matter how small, has the potential to create a cascade across the system as a whole.

Duncan Watts was at Columbia University when he published a groundbreaking paper on this in 2002 with the austere title “A simple model of global cascades on random networks”. He was subsequently snapped up first by Yahoo, then by Microsoft.

Watts set up a highly abstract model of nodes, all of equal importance, connected on a network. Initially, all of them were, to put it in the Grid context, working well. He then investigated what happens when a very small number of them malfunction. In his model, a node fails once a large enough fraction of the nodes it is connected to have failed.

The results were surprising. Most of the time, the shocks – made deliberately small by assumption – were contained and the network continued to function well. But occasionally, a small shock triggered a system-wide collapse.

Watts coined the phrase “robust yet fragile” to describe this phenomenon. Most of the time, a network is robust when it is given a small shock. But a shock of the same size can, from time to time, percolate through the system.
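For readers who want to see the effect for themselves, here is a minimal sketch of a threshold cascade of the kind Watts describes. It is an illustration, not his actual code. The function names, the network size, the average number of connections per node (5), the failure threshold (0.18) and the cut-off for calling a cascade "large" are all assumptions chosen for the example. Each run knocks out one randomly chosen node, and a node then fails once at least the threshold fraction of its neighbours has failed.

```python
import random


def random_graph(n, mean_degree, rng):
    """Random network: each pair of nodes is linked with equal probability."""
    p = mean_degree / (n - 1)
    neighbours = [set() for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if rng.random() < p:
                neighbours[i].add(j)
                neighbours[j].add(i)
    return neighbours


def cascade_size(neighbours, seed_node, threshold):
    """Fraction of nodes that have failed once the cascade stops."""
    failed = {seed_node}
    frontier = [seed_node]
    while frontier:
        next_frontier = []
        for node in frontier:
            for nb in neighbours[node]:
                if nb in failed:
                    continue
                # A node fails when enough of its neighbours have failed.
                failed_nbs = sum(1 for x in neighbours[nb] if x in failed)
                if failed_nbs / len(neighbours[nb]) >= threshold:
                    failed.add(nb)
                    next_frontier.append(nb)
        frontier = next_frontier
    return len(failed) / len(neighbours)


def run_experiment(n=1000, mean_degree=5.0, threshold=0.18, trials=200, seed=0):
    """Apply the same tiny shock (one failed node) many times to one network."""
    rng = random.Random(seed)
    neighbours = random_graph(n, mean_degree, rng)
    return [cascade_size(neighbours, rng.randrange(n), threshold)
            for _ in range(trials)]


if __name__ == "__main__":
    sizes = run_experiment()
    large = sum(1 for s in sizes if s > 0.5)  # assumed cut-off for "large"
    print(f"runs: {len(sizes)}, cascades over half the network: {large}")
    print(f"largest cascade: {max(sizes):.1%} of nodes")
```

Every run applies a shock of exactly the same size, so any difference in outcome comes purely from where on the network the shock lands. The split between small and large cascades depends on the assumed parameters and on the random draw, so the numbers printed should be read as illustrative only.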

In the mid-2000s, the academic Rich Colbaugh was commissioned by the Department of Defense to look into the US power grid.

The physical connectivity of the network had increased substantially due to advances in communication and control technology.

The total number of outages had fallen – when one plant failed, it was easier to activate a back-up. But the frequency of very large outages, while still rare, had increased.

I collaborated with Colbaugh on this seeming paradox. We showed that it is in fact an inherent property of networked systems. Increasing the number of connections causes an improvement in the performance of the system, yet at the same time, it makes it more vulnerable to catastrophic failures on a system-wide scale.

There may still prove to be a simple explanation of the sort loved by decision-makers the world over. But the science of networks may shed more light than theories based on conspiracy and incompetence.

Main image credit: Getty