Tech firms need to earn back public trust in order to innovate

Innovations in technologies such as biometrics, cyber security, and artificial intelligence stand to perform an unprecedented, positive role in society.

But their development means that we’re being asked to entrust more of our lives to technologies that we don’t fully understand, hand over our data to people we can’t see, and form relationships with businesses we don’t really know.

That’s a scary prospect, especially when some technologies are clearly falling short of the high levels of security, privacy, and ethical use that everyday consumers rightly expect. Examples range from small-scale scare stories, like the talking toy doll hackable by anyone with a smartphone, to bigger horror tales, such as the 2018 cyber security breach that crippled four gas pipelines across the US.

What these examples show is how inexpensive, non-secure devices are becoming conduits for cyber criminals to gain access to important information. The pipelines were compromised through a video camera, and the doll was hacked through a $2 microphone.

With an anticipated 50bn internet-connected devices set to be in use by 2025, ranging from watches and fridges to cars, lighting, and toothbrushes, the need to secure each link in this rapidly expanding chain is more critical than ever before.

In the future, we’ll (reluctantly) tolerate our computers crashing every now and then, but we shouldn’t tolerate technologies crashing self-driving cars, compromising smart home security, and sharing details of our private lives with the rest of the world.

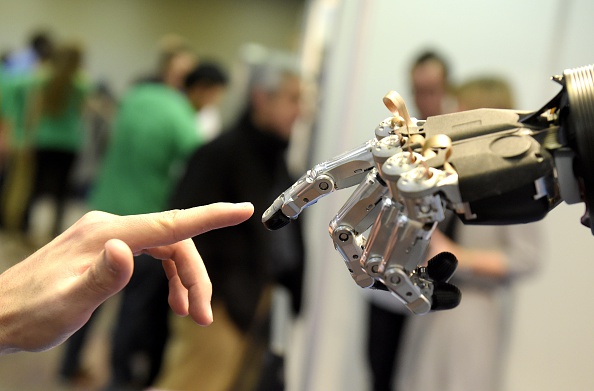

So when asking people to make a leap of faith and participate in a technology-led economy and society — to literally put their lives into the hands of a machine — all such solutions need to be infallible. They need to be intuitively trusted.

And building that trust — which in this case requires a “confident relationship with the unknown” — is arguably the single greatest challenge facing the technology industry today.

To that end, I see three key principles that must guide our collective thinking in order to help foster greater public confidence in technology as we continue to innovate.

Security by design

First, every connected entity, no matter how small or apparently insignificant, needs to be secure and to operate within a secure network.

More importantly, responsibility for security lies with the producer. If a device can’t be patched remotely, it shouldn’t be connected.

Privacy by design

Personal data is the consumer’s possession, not a commodity. And that means we must empower individuals with information on how their data is used and how they can control it.

This principle of privacy by design also requires that organisations handling people’s data do so with the highest standards of security, transparency, and integrity.

The creation of user-centric digital identities — which allow you to prove your identity in the physical and digital realms, with the minimum amount of data exchanged, only with your consent and with parties you trust — could be the future.

Security must be a driver of consumer experience

Finally, the user experience needs to be both secure and convenient. Consumers want a quick and seamless experience, but they also want to know that the interaction is safe and secure.

Take the example of biometric authentication. With fingerprint and facial biometrics, we have replaced the password with the person. This, combined with behavioural biometrics and device ownership, has enabled multi-factor authentication and frictionless transactions, enhancing the consumer experience.

It’s no longer acceptable to “move fast and break things”, the Silicon Valley mantra that placed product development above all else. Instead, it is incumbent on all parties involved in the development, use, and application of technology, from policymakers to industry players, to build a cohesive environment where new technologies can thrive and evolve, while still ensuring that the right safeguards are built into the way they are developed.


Main image credit: Getty