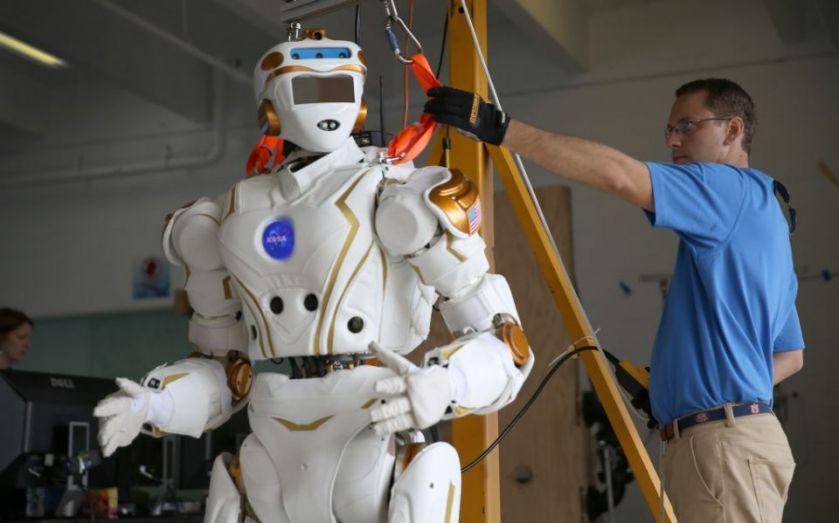

Humans will be at the mercy of US killer robots in a matter of years, scientist warns

There's no escape – robots capable of killing people without human intervention are on their way, and we will be utterly defenceless against them.

This is the warning issued by Stuart Russell, a professor of Artificial Intelligence (AI) at the University of California, Berkeley. He says the US military is currently developing Lethal Autonomous Weapons Systems (LAWS), and that these could be fully operational within just a few years.

“The AI and robotics communities face an important ethical decision: whether to support or oppose the development of LAWS,” he writes in Nature.

LAWS have been described as the “third revolution” in warfare, after gunpowder and nuclear arms. There are clear benefits to using them: machines, rather than human beings, can be placed on the front line. But while some lives may be saved this way, many more will be put at serious risk.

“Autonomous weapons systems select and engage targets without human intervention; they become lethal when those targets include humans,” Russell continues.

At the moment, the US military is working on two LAWS projects. The first, Fast Lightweight Autonomy (FLA), involves programming tiny rotorcraft to manoeuvre unaided at high speed in urban areas and inside buildings. The second, Collaborative Operations in Denied Environment (CODE), will see teams of autonomous aerial vehicles carrying out “all steps of a strike mission – find, fix, track, target, engage, assess”.

But it's not just the US that's working to develop these machines – the UK and Israel are also developing their own LAWS, and other countries may well be pursuing projects in secret.

Protecting humans

The prospect of autonomous robots has attracted a lot of attention from world leaders. It was one of the topics discussed at Davos at the start of the year, and last month the UN held a conference in Geneva to discuss their future in combat.

Questions left to be answered include whether robots should be banned, and if not, the extent to which human control is necessary. Michael Møller, a top UN official, said the world has the opportunity to take “pre-emptive action” and ensure the “ultimate decision to end life remains firmly under human control”.

So how, legally, could they be stopped? International humanitarian law, which governs attacks on humans in times of war, has no provisions for robot autonomy. But the UN has the potential to limit or ban these weapons under the Convention on Certain Conventional Weapons.

If an international treaty is put in place within the next few years, as happened with blinding laser weapons in 1995, we may have a lot less reason to worry about terrifying robots taking our lives.